19) Neural networks can self-organize via competition (Grossberg – 1978, Kohonen – 1981)

Hubel and Wiesel's work on the development of cortical columns (see previous post) hinted at the role of competition, but its importance only became clear once Grossberg and Kohonen built computational architectures that explicitly explored it.

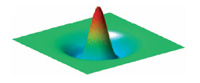

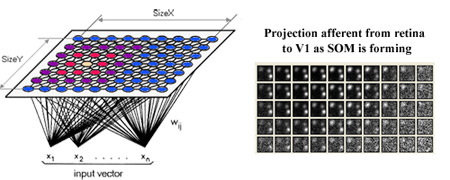

Grossberg was the first to illustrate the possibility of self-organization via competition. Several years later Kohonen created what is now termed a Kohonen network, or self-organizing map (SOM). This kind of network is composed of layers of neuron-like units connected with local excitation and, just outside that excitation, local inhibition. The first figure above illustrates this 'Mexican hat' function in three dimensions, while the second represents it in two dimensions along with its inputs.
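To make the 'Mexican hat' shape concrete, here is a minimal sketch that models it as a difference of two Gaussians: a narrow excitatory one minus a broader, weaker inhibitory one. The difference-of-Gaussians form, the function name `mexican_hat`, and all parameter values are illustrative assumptions, not taken from Grossberg's or Kohonen's papers.

```python
import math


def mexican_hat(distance, sigma_exc=1.0, sigma_inh=3.0, a_exc=1.0, a_inh=0.5):
    """Lateral interaction strength between two units `distance` apart.

    Hypothetical parameters: a narrow excitatory Gaussian (sigma_exc) minus
    a wider, weaker inhibitory Gaussian (sigma_inh).
    """
    excitation = a_exc * math.exp(-distance ** 2 / (2 * sigma_exc ** 2))
    inhibition = a_inh * math.exp(-distance ** 2 / (2 * sigma_inh ** 2))
    return excitation - inhibition


# Positive near the center, negative in the surround, fading toward zero far away.
for d in range(0, 9):
    print(d, round(mexican_hat(d), 3))
```

With these assumed values, nearby units excite each other, units a little farther away inhibit each other, and distant units barely interact at all.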

These networks, which implement Hebbian learning, will spontaneously organize into topographic maps.

For instance, line orientations that are similar to each other will tend to be represented by nearby neural units, while less similar line orientations will tend to be represented by more distant neural units. This occurs even when the map starts out with random synaptic weights. Also, this spontaneous organization will occur for even very complex stimuli (e.g., faces) as long as there are spatio-temporal regularities in the inputs.

Another interesting feature of Kohonen networks is that the more frequent input patterns are represented by larger areas in the map. This is consistent with findings in cortex, where more frequently used representations have larger cortical areas dedicated to them.
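Both properties described above (ordering that emerges from random weights, and more map area for more frequent inputs) can be explored with a toy one-dimensional SOM. This is only a sketch under stated assumptions: it uses the common simplified Kohonen update (pick the best-matching unit, then move it and its map neighbors toward the input) in place of explicit lateral connections, and the names `train_som`, `neighbor_gap`, `uniform_input`, and `skewed_input`, along with every parameter value, are hypothetical choices for illustration.

```python
import math
import random


def train_som(sample_input, n_units=20, n_steps=4000, seed=0):
    """Train a 1-D chain of units on scalar inputs drawn from [0, 1]."""
    rng = random.Random(seed)
    # Start from random weights, so there is no initial topographic order.
    weights = [rng.random() for _ in range(n_units)]
    initial = list(weights)
    for step in range(n_steps):
        frac = step / n_steps
        lr = 0.5 * (0.02 / 0.5) ** frac      # learning rate decays from 0.5 toward 0.02
        sigma = 5.0 * (0.5 / 5.0) ** frac    # neighborhood shrinks from 5 toward 0.5 units
        x = sample_input(rng)
        # Competition: the unit whose weight is closest to the input wins.
        winner = min(range(n_units), key=lambda i: abs(weights[i] - x))
        # Cooperation: the winner and its map neighbors move toward the input.
        for i in range(n_units):
            h = math.exp(-((i - winner) ** 2) / (2 * sigma ** 2))
            weights[i] += lr * h * (x - weights[i])
    return initial, weights


def neighbor_gap(weights):
    """Mean absolute weight difference between adjacent units on the map."""
    return sum(abs(a - b) for a, b in zip(weights, weights[1:])) / (len(weights) - 1)


def uniform_input(rng):
    return rng.random()


def skewed_input(rng):
    # 80% of inputs fall in the narrow range [0, 0.2); the rest in [0.2, 1).
    if rng.random() < 0.8:
        return 0.2 * rng.random()
    return 0.2 + 0.8 * rng.random()


# Ordering: adjacent units should end up with more similar weights than at the start.
initial, trained = train_som(uniform_input)
print("neighbor gap before:", round(neighbor_gap(initial), 3))
print("neighbor gap after: ", round(neighbor_gap(trained), 3))

# Magnification: count the units devoted to the frequent fifth of the input range.
_, skewed = train_som(skewed_input)
frequent_units = sum(1 for w in skewed if w < 0.2)
print("units in the frequent range:", frequent_units, "of", len(skewed))
```

If the map allocated units purely by the width of the input range, only about a fifth of them would land below 0.2. A count above that share is the toy analogue of frequently used representations claiming more cortical area.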

There are several computational advantages to having local competition between similar stimuli, which SOMs can provide.

One such advantage is that local competition can increase specificity of the representation by ruling out close alternatives via lateral inhibition. Using this computational trick, the retina can discern visual details better at the edges of objects (due to contrast enhancement).
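A small sketch of that contrast-enhancement effect, assuming a deliberately crude model in which each unit is excited by its own input and inhibited by the average of its two immediate neighbors. The function name `lateral_inhibition` and the `strength` value are hypothetical, and real retinal circuitry is far richer than this.

```python
def lateral_inhibition(signal, strength=0.4):
    """Each unit's response is its own input minus a share of its neighbors' input."""
    n = len(signal)
    response = []
    for i, center in enumerate(signal):
        left = signal[max(i - 1, 0)]        # border units reuse their own value
        right = signal[min(i + 1, n - 1)]
        response.append(center - strength * (left + right) / 2)
    return response


# A step edge: a dim region next to a bright region.
step_edge = [1.0] * 5 + [3.0] * 5
response = lateral_inhibition(step_edge)
print([round(r, 2) for r in response])
```

Away from the edge the response is flat, but the last dim unit dips below its neighbors (it is inhibited by the bright side) and the first bright unit rises above its neighbors (it receives less inhibition from the dim side). The edge is exaggerated relative to the plateaus on either side.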

Another computational advantage is enhancement of what's behaviorally important relative to what isn't. This works on a short time-scale with attention (what's not important is inhibited), and on a longer time-scale with increases in representational space in the map with repeated use, which increases representational resolution (e.g., the hand representation in the somatosensory homunculus).

You can explore SOMs using Topographica, a computational modeling environment for simulating topographic maps in cortex. Of special interest here is the SOM tutorial available at topographica.org.

Implication: The mind, largely governed by reward-seeking behavior on a continuum between controlled and automatic processing, is implemented in an electro-chemical organ with distributed and modular function consisting of excitatory and inhibitory neurons communicating via ion-induced action potentials over convergent and divergent synaptic connections strengthened by correlated activity. The cortex, a part of that organ organized via local competition and composed of functional column units whose spatial dedication determines representational resolution, is composed of many specialized regions involved in perception (e.g., touch: parietal, vision: occipital), action (e.g., frontal), and memory (e.g., short-term: prefrontal, long-term: temporal), which depend on inter-regional communication for functional integration.

[This post is part of a series chronicling history's top brain computation insights (see the first of the series for a detailed description). See the history category archive to see all of the entries thus far.]

-MC