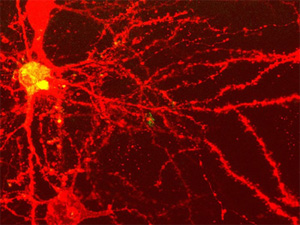

In 1992 Rizzolatti and his colleagues found a special kind of neuron in the premotor cortex of monkeys (Di Pellegrino et al., 1992).

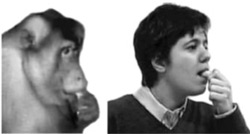

These neurons, which respond to an action whether it's performed by the monkey being recorded or by a different monkey (or person) it's watching, are called mirror neurons.

Many neuroscientists, such as V. S. Ramachandran, have seized upon mirror neurons as a potential explanatory ‘holy grail’ of human capabilities such as imitation, empathy, and language. However, to date there are no adequate models explaining exactly how such neurons would provide such amazing capabilities.

Perhaps related to the lack of any clear functional model, mirror neurons have another major problem: Their functional definition is too broad.

Typically, mirror neurons are defined as cells that respond selectively to an action both when the subject performs it and when that subject observes another performing it. A basic assumption is that any such neuron reflects a correspondence between self and other, and that such a correspondence can turn an observation into imitation (or empathy, or language).

However, there are several other reasons a neuron might respond both when an action is performed and observed.

First, there may be an abstract concept (e.g., open hand), which is involved in, but not necessary for, the action, the observation of the action, or any potential imitation of the action.

Next, there may be a purely sensory representation (e.g., of hands / objects opening) which becomes involved independently of action by an agent.

Finally, a neuron may respond to another subject’s action not because it is performing a mapping between self and other but because the other’s action is a cue to load up the same action plan. In this case the ‘mirror’ mapping is performed by another set of neurons, and this neuron is simply reflecting the action plan, regardless of where the idea to load that plan originated. For instance, a tasty piece of food may cause that neuron to fire because the same motor plan is loaded in anticipation of grasping it.

It is clear that mirror neurons, of the type first described by Rizzolatti et al., exist (how else could imitation occur?). However, the practical definition for these neurons is too broad.

How might we improve the definition of mirror neurons? Possibly by verifying that a given cell (or population of cells) responds only while observing a given action and while carrying out that same action.

Alternatively, subtractive methods may be more effective at defining mirror neurons than response properties. For instance, removing a mirror neuron population should make imitation less accurate or impossible. Using this kind of method avoids the possibility that a neuron could respond like a mirror neuron but not actually contribute to behavior thought to depend on mirror neurons.

Of course, the best approach would involve both observing response properties and using controlled lesions. Even better would be to do this with human mirror neurons using less invasive techniques (e.g., fMRI, MEG, TMS), since we are ultimately interested in how mirror neurons contribute to higher-level behaviors most developed in Homo sapiens, such as imitation, empathy, and language.

-MC

Image from The Phineas Gage Fan Club (originally from Ferrari et al. (2003)).

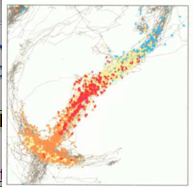

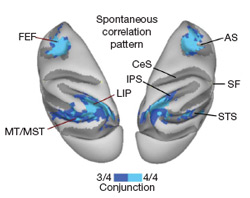

Functional magnetic resonance imaging (fMRI), a method for safely measuring brain activity, has been around for about 15 years. Within the last 10 of those years a revolutionary, if mysterious, method has been developing using the technology. This method, resting state functional connectivity (rs-fcMRI), has recently gained popularity for its putative ability to measure how brain regions interact innately (outside of any particular task context).

I recently published my first primary-author research study (
