…from a knee-jerk reaction to its immediate input?

Although "reflex reactions" such as the patellar reflex (also known as the knee-jerk reflex) are among the first things a neuroscience student learns about, the cognitive neuroscientist is interested in the kind of processing that might occur between inputs and outputs when the mapping is less direct than the knee-jerk reaction.

A step up from the knee-jerk reflex can be found in the reflexes of the sea slug Aplysia. Unlike the patellar reflex, Aplysia's gill and siphon retraction reflexes seem to "habituate" over time: with repeated stimulation, the original input-output mapping weakens. This is a simple form of memory, but no real "processing" can be said to go on there.

Specifically, cognitive neuroscientists are interested in mappings where "processing" seems to occur before the output decision is made. As MC pointed out earlier, the opportunity for memory (past experience) to affect those mappings is probably important for "free will".

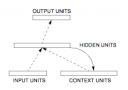

But how can past experience affect future mappings in interesting ways? One answer to this question appeared in 1990, a year that began a new era of experimentation with neural network models capable of indirect input-output mappings. In that year, Elman (inspired by Jordan's 1986 work) demonstrated the Simple Recurrent Network in his paper "Finding Structure in Time". The concept behind this network is shown in the picture associated with this entry.

The basic idea of the Simple Recurrent Network is that as information comes in (through the input units), an on-line memory of that information is preserved and recirculated (through the "context" units, which hold a copy of the hidden layer's previous state). The input and context units together influence the hidden layer, which in turn can trigger responses in the output layer. This means that the immediate output of the network depends not only on the current input, but also on the inputs that came before it.
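
To make the recirculation concrete, here is a minimal sketch of one pass through such a network in plain Python. It is only an illustration under stated assumptions, not Elman's actual code: the layer sizes, the random untrained weights, the sigmoid units, the zero-initialized context, and the names `make_srn` and `run_srn` are all made up for this example.

```python
import math
import random


def make_srn(n_in, n_hidden, n_out, seed=0):
    """Build a toy SRN with small random weights (sizes and range are illustrative)."""
    rng = random.Random(seed)

    def matrix(rows, cols):
        return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

    return {
        "w_in": matrix(n_hidden, n_in),       # input units   -> hidden units
        "w_ctx": matrix(n_hidden, n_hidden),  # context units -> hidden units
        "w_out": matrix(n_out, n_hidden),     # hidden units  -> output units
    }


def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def run_srn(net, inputs):
    """Feed a sequence of input vectors through the network, one per time step."""
    n_hidden = len(net["w_ctx"])
    context = [0.0] * n_hidden  # assumption: the context starts out empty
    outputs = []
    for x in inputs:
        from_input = matvec(net["w_in"], x)
        from_context = matvec(net["w_ctx"], context)
        # The hidden layer sees the current input AND the recirculated context.
        hidden = [sigmoid(a + b) for a, b in zip(from_input, from_context)]
        outputs.append([sigmoid(o) for o in matvec(net["w_out"], hidden)])
        # Copy the hidden state into the context units for the next time step.
        context = list(hidden)
    return outputs


net = make_srn(n_in=2, n_hidden=3, n_out=1)
a, b = [1.0, 0.0], [0.0, 1.0]

# Both sequences end with the same input b, but their histories differ.
out_after_a = run_srn(net, [a, b])[-1]
out_after_b = run_srn(net, [b, b])[-1]
print(out_after_a, out_after_b)
```

The two printed outputs differ even though the final input is identical in both runs, because the context units carry a trace of the earlier input. With untrained weights that difference is arbitrary; it only becomes useful once the weights are learned.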

The most interesting aspect of the Simple Recurrent Network, however, is that the strengths of the connections in the network change during training, depending on what the modeler requires the network to output. (In Elman's original formulation, the copy-back connections from the hidden units to the context units are fixed, and the remaining connections are learned.) The network learns to preserve information in the context layer loops so that it can correctly produce the desired output. For example, if the task of the network is to remember the second word in a sentence, it will learn to maintain the second word when it comes in, while ignoring the intervening words, so that at the end of the sentence it outputs the target word.

Although this network cannot be said to have "free" will, especially because of the way its connections are forcefully trained, its operation can hint at the type of phenomena researchers should seek in trying to understand cognition in neural systems.

-PL