Modern technologies allow eye movements to be used as a tool for studying language processing during tasks such as natural reading. Saccadic eye movements during reading turn out to be highly sensitive to a number of linguistic variables. Several computational models of eye movement control have been developed to explain how these variables affect eye movements. Although these models have focused on relatively low-level cognitive, perceptual and motor variables, there has been a concerted effort in the past few years (spurred by psycholinguists) to extend them to syntactic processing.

During a modeling symposium at ECEM2007 (the 14th European Conference on Eye Movements), Dr. Ronan Reilly presented a first attempt to take syntax into account in his eye-movement control model (GLENMORE; Reilly & Radach, Cognitive Systems Research, 2006).

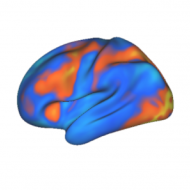

Eye-tracking studies have shown that frequent and predictable words are looked at (fixated) for less time and, depending on the age of the reader, skipped more often. Longer words are more likely to receive multiple fixations. The distribution of fixations on words is skewed to the left of the word’s center.

All of these patterns are captured quite well by models of eye-movement control (e.g., SWIFT and E-Z Reader, to name just a couple). At this point, the field has a wide range of models with differing underlying assumptions about how perceptual, motor and cognitive processes interact to control eye movements during reading. However, few if any of these models show the effects of syntactic processing on eye movements that have been observed by psycholinguists (e.g., ‘garden-path’ and ‘clause wrap-up’ effects).

In line with the connectionist style of the GLENMORE model, the grammar extension Dr. Reilly implemented was in the form of a Simple Recurrent Network (SRN). As discussed briefly in an earlier post on backpropagation of error, SRNs have the ability to derive grammatical categories by simply being trained to predict the next word in a sequence (Elman, Cognitive Science, 1990). Thus SRNs are a relatively assumption-free approach to building a grammar.
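To make the next-word-prediction idea concrete, here is a minimal sketch of an Elman-style SRN in plain Python. It is not Dr. Reilly's network: the class name `SimpleRecurrentNetwork`, the five-word toy vocabulary, the layer sizes and the learning rate are all assumptions chosen for illustration. As in Elman's setup, the previous hidden state is copied into a context layer and treated as a fixed input when the error is backpropagated.

```python
import math
import random


class SimpleRecurrentNetwork:
    """Illustrative Elman-style SRN: (input + context) -> hidden -> softmax."""

    def __init__(self, vocab_size, hidden_size, seed=0):
        rng = random.Random(seed)

        def init(rows, cols):
            return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

        self.V, self.H = vocab_size, hidden_size
        self.W_in = init(hidden_size, vocab_size)    # input -> hidden
        self.W_ctx = init(hidden_size, hidden_size)  # context -> hidden
        self.W_out = init(vocab_size, hidden_size)   # hidden -> output
        self.context = [0.0] * hidden_size

    def reset(self):
        """Clear the context layer at the start of a new sequence."""
        self.context = [0.0] * self.H

    def step(self, token, target=None, lr=0.1):
        """Consume one token id and return the next-token distribution.

        If `target` is given, take one gradient step on the cross-entropy
        loss, treating the context layer as a fixed input.
        """
        ctx = self.context
        hidden = [
            math.tanh(self.W_in[j][token] + sum(w * c for w, c in zip(self.W_ctx[j], ctx)))
            for j in range(self.H)
        ]
        logits = [sum(w * h for w, h in zip(row, hidden)) for row in self.W_out]
        peak = max(logits)
        exps = [math.exp(z - peak) for z in logits]
        total = sum(exps)
        probs = [e / total for e in exps]

        if target is not None:
            # Gradient of cross-entropy w.r.t. the logits: probs - one_hot(target).
            d_logits = [p - (1.0 if k == target else 0.0) for k, p in enumerate(probs)]
            # Backpropagate into the hidden layer before W_out is changed.
            d_hidden = [
                sum(d_logits[k] * self.W_out[k][j] for k in range(self.V)) * (1.0 - hidden[j] ** 2)
                for j in range(self.H)
            ]
            for k in range(self.V):
                for j in range(self.H):
                    self.W_out[k][j] -= lr * d_logits[k] * hidden[j]
            for j in range(self.H):
                self.W_in[j][token] -= lr * d_hidden[j]
                for i in range(self.H):
                    self.W_ctx[j][i] -= lr * d_hidden[j] * ctx[i]

        self.context = hidden  # copy the hidden state into the context layer
        return probs


# Toy corpus (an assumption for illustration): "noun verb ." sentences.
vocab = ["dog", "cat", "runs", "sleeps", "."]
idx = {word: i for i, word in enumerate(vocab)}
rng = random.Random(1)
net = SimpleRecurrentNetwork(len(vocab), hidden_size=8)

for _ in range(2000):
    sentence = [rng.choice(["dog", "cat"]), rng.choice(["runs", "sleeps"]), "."]
    net.reset()
    for current, nxt in zip(sentence, sentence[1:]):
        net.step(idx[current], target=idx[nxt])

# After a noun, the network should put most of its probability on the verbs.
net.reset()
probs_after_noun = net.step(idx["dog"])
verb_mass = probs_after_noun[idx["runs"]] + probs_after_noun[idx["sleeps"]]
```

Nothing in the network is told which tokens are nouns or verbs. Any category-like behaviour, such as expecting a verb after either noun, comes only from the prediction task, which is the sense in which the approach is relatively assumption-free.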

Although the results presented at the ECEM symposium were preliminary (and as yet unpublished), they were impressive. The SRN had been successfully trained on a large corpus of text (larger than any corpus I have yet seen) and had developed grammatical categories from the tokens. The SRN was interfaced to the GLENMORE model so that more syntactically predictable words and structures were processed more quickly. Dr. Reilly found that eye movements were generally affected in ways that were consistent with patterns seen in human participants.
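The talk did not spell out how the SRN's output was coupled to GLENMORE, so the following is only a guess at what such a link could look like. It assumes the network's probability for the upcoming word is converted to surprisal (in bits) and that processing time grows linearly with surprisal. The function names and the `base_ms` and `ms_per_bit` values are hypothetical, not parameters of any published model.

```python
import math


def surprisal_bits(probability):
    """Surprisal of an event with the given probability, in bits."""
    if not 0.0 < probability <= 1.0:
        raise ValueError("probability must be in (0, 1]")
    return -math.log2(probability)


def processing_time_ms(probability, base_ms=150.0, ms_per_bit=20.0):
    """Hypothetical linear link: less predictable words take longer to process."""
    return base_ms + ms_per_bit * surprisal_bits(probability)


predictable = processing_time_ms(0.5)     # a word the network expects
unpredictable = processing_time_ms(0.01)  # a word the network finds surprising
```

Under these assumptions a syntactically expected word gets a shorter processing time than an unexpected one, which is the qualitative pattern described above.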

Another perspective on Dr. Reilly’s work is that it may be an embodied test of the SRN’s grammar-learning capabilities. I should point out that SRNs have received criticism from linguists as a model for grammar learning because they “don’t [appear to (my addition)] explicitly represent rules”; however, many cognitive scientists are still not convinced that there are grammar rules in human neural networks (e.g., see the exchange between Gary Marcus, Jay McClelland and Dave Plaut in Trends in Cognitive Sciences, 1999).

In conclusion, it’s exciting to see neural networks applied to language processing in a way that will create testable predictions (e.g., on measurable behaviors such as eye movements). I look forward to further presentations and publications on this topic.

-PL